I’ve been trying to fit a Tweedie likelihood model in Stan that allows for inflated zeroes. In case it is useful to anyone, I have reproduced what I have done here. I think the efficiency of the code could probably be improved, but otherwise it appears to work.

I have implemented the R “tweedie” package functions (Dunn 2017) which work, but are quite slow. The strategy is to split the data into 3 groups: i) y==0, ii) low y (dependent variable) values for which the series sum can be used and iii) higher y values which use a inverse Fourier approximation. This just follows what the R “tweedie” package density function does. The Tweedie density is parameterised with a mean (mu), dispersion (phi) and index ( p ) parameters. My approach has been to allow the index parameter p to be fitted. If this parameter is fixed, the function could be made much more efficient, but I was interested in taking into account the uncertainty associated with this parameter. The parameter controls the form of the function, so calculation methods also vary dependent on this parameter’s range. I don’t think this will be a problem in practice as the estimate of p should not be varying enormously, although there could be a problem if close to a boundary for the inverse Fourier method. In this latter case, a constant matrix grid is supplied to the function and you need to know the range of p to use the correct one. Switching between grids could affect the differentiability of the function at the boundaries. In my case, I bounded p within the appropriate range for 1.5-2.0 and this worked fine as p did not move outside 1.65-1.7. Parameters p and phi seemed well-behaved in the MCMC.

There are three log probability density functions for each of: zero y values, the series approximation and the inverse Fourier approximation.

real cpgz_lpdf(vector lmu, real phi, real p) {

//Compound Poisson-gamma (Tweedie) log density for y==0

//Returns the sum of log-likelihood for zeroes based on Tweedie

// parameters exp(lmu), phi and p

int N = rows(lmu);

real p2 = 2.0 - p;

real lscale = 1.0/(phi * p2);

vector[N] lambda = exp(lmu*p2)*lscale;

return -sum(lambda);

} //cpgz_lpdf

real cpgs_lpdf(vector ly, vector lmu, real phi, real p, vector jlohi, vector lgam_jlohi) {

//Returns compound Poisson-gamma (Tweedie) log-density for series calculation y>0

//ly is the log dependent variable vector. Some values are pre-calculated for speed:

// jlohi is the vector sequence of integers Nmin:Nmax for the series sum

// and lgam_jlohi=lgamma(jlohi + 1).

//Only works for 1 < p < 2: No check is made that p is in range

int N = rows(ly);

int Nj = rows(jlohi);

real p1 = 1.0 - p;

real p2 = 2.0 - p;

real a = (2.0 - p)/(1.0 - p);

real a1 = 1.0 - a;

real r_p1phi = 1.0/(p1 * phi);

real r_p2phi = 1.0/(p2 * phi);

real rconst = a * log(-p1) - a1 * log(phi) - log(p2);

vector[N] r = -a * ly + rconst;

vector[N] lambda = r_p2phi * exp(p2*lmu);

vector[N] r_tau = r_p1phi * exp(ly + p1*lmu);

vector[N] logw;

vector[Nj] lGj = lgam_jlohi + lgamma(-a * jlohi);

for (i in 1:N)

logw[i] = log_sum_exp(r[i]*jlohi - lGj);

return sum(r_tau - lambda - ly + logw);

} //cpgs_lpdf

real cpgf_lpdf(vector ly, vector lmu, real phi, real p, matrix grid) {

//Compound Poisson Gamma (Tweedie) log density using

//Fourier Inversion method of Dunn and Smythe 2008 for y>0

// 1.5 < p < 2.0 - dependent on pre-calculated grid matrix.

// Different grids cover different ranges of p. No check is made that p is in range

//ly and lmu are log values

int N = rows(ly);

int nx = rows(grid); //nx=26

int np = cols(grid); //np=16

real ln_sqrt_2pi = 0.5*log(2 * pi()); //constant could be dropped

real p1 = 1 - p;

real p2 = 2 - p;

real pmid = (1.5 + 2.0)*0.5;

real prange = 2.0 - 1.5;

real xixrange = 0.9;

real xixmid = 0.9*0.5;

vector[nx] jt = cumulative_sum(rep_vector(1, nx)) - 1; //0:nx-1

vector[nx] ts = 0.5*cos((2 * jt + 1)/(2.0 * nx ) * pi())* xixrange + xixmid;

vector[np] jp = cumulative_sum(rep_vector(1, np)) - 1; //0:np-1

vector[np] ps = 0.5*cos((2 * jp + 1)/(2.0 * np ) * pi()) * prange + pmid;

vector[nx] dd1;

vector[N] lxi = -ly * p2 + log(phi);

vector[N] xi = exp(lxi);

vector[N] xix = xi ./ (1.0 + xi);

vector[N] rho;

vector[N] dev;

vector[N] ll;

for (i in 1:nx) {

dd1[i] = grid[i, np];

for (j in 1:(np-1)) {

int k = np-j;

dd1[i] = dd1[i] * (p - ps[k]) + grid[i, k];

}

}

rho = rep_vector(dd1[nx], N);

for (i in 1:(nx-1)) {

int k = nx-i;

rho = rho .* (xix - ts[k]) + dd1[k];

}

dev = exp(ly*p2) - p2*exp(ly + lmu*p1) + p1*exp(lmu*p2); //deviance

ll = -dev/(p1 * p2 * phi) - ly - ln_sqrt_2pi - 0.5*lxi + log(rho);

return sum(ll);

} //cpgf_lpdf

The dependent variable y is used to determine which method is used. It is index-sorted to provide effectively three vectors, one for each function. Choosing between the series sum and inverse Fourier is a little more complicated as the break-point is determined by phi and p, so a function is used to calculate that point and adjust where the switch occurs each iteration.

real ly_series_break(real phi, real p) {

//returns log(y) benchmark for switching between series and Fourier inversion methods

return -log(0.9/(0.1*phi))/(2.0-p);

} //ly_series_break

Finally, whereas the number of calculations in the inverse Fourier estimate of the density is fixed, the series sum terms vary dependent on the precision required. In my case, the lower bound for the series sum was always 1, so I didn’t bother calculating it. This is the function for the upper bound which was calculated once on each interation:

int calc_jhi(real phi, real p) {

//Calculates the upper bound of series terms to reach the required precision

//Here the reference level to switch y=(0.9/(0.1*phi))^(-1/(2-p)) relevant for 1.5 < p < 2.0

// as used by Dunn 2017: Code ported from R tweedie package

real drop = 35; //Required precision (log)

real p2 = (2 - p);

real a = p2/(1 - p);

real a1 = 1 - a;

real ly = -log(9/phi)/p2;

real lp2 = log(p2);

real j_max = 1 / (9*p2);

real cc = a * (lp2-ly) - a1 * log(phi) - lp2 + a1;

real wmax = a1 * j_max - drop;

real estlogw = a1 * j_max;

real rj = 1;

int j = 1;

while (estlogw > wmax) {

j += 2;

rj += 2;

estlogw = rj * (cc - a1 * log(rj));

}

return j;

} //calc_jhi

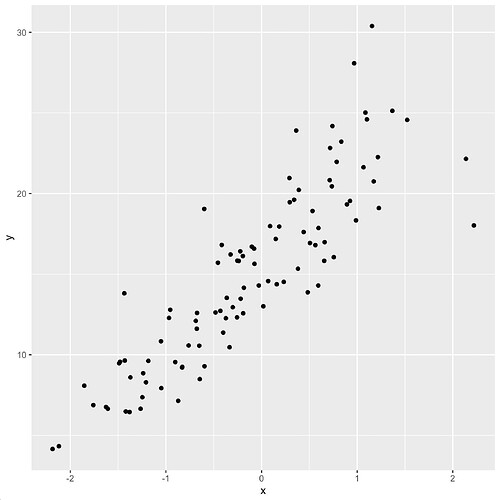

The link (hopefully) provides a simple RStudio project with a fixed mu, demonstrating a practical example:

Any comments, corrections and improvements most welcome.

References etc.

Dunn, P. K. (2017). Tweedie: Evaluation of Tweedie exponential family models. R package version 2.3.

which I believe is mostly based on:

Dunn, P. K. and Smyth, G. K. (2005). Series evaluation of Tweedie exponential dispersion models. Statistics and Computing, 15(4), 267-280.

Dunn, P. K. and Smyth, G. K. (2008). Evaluation of Tweedie exponential dispersion models using Fourier inversion. Statistics and Computing, 18(1), 73-86.

Thanks also for some early pointers from Bob Carpenter.